How the recommendation system works

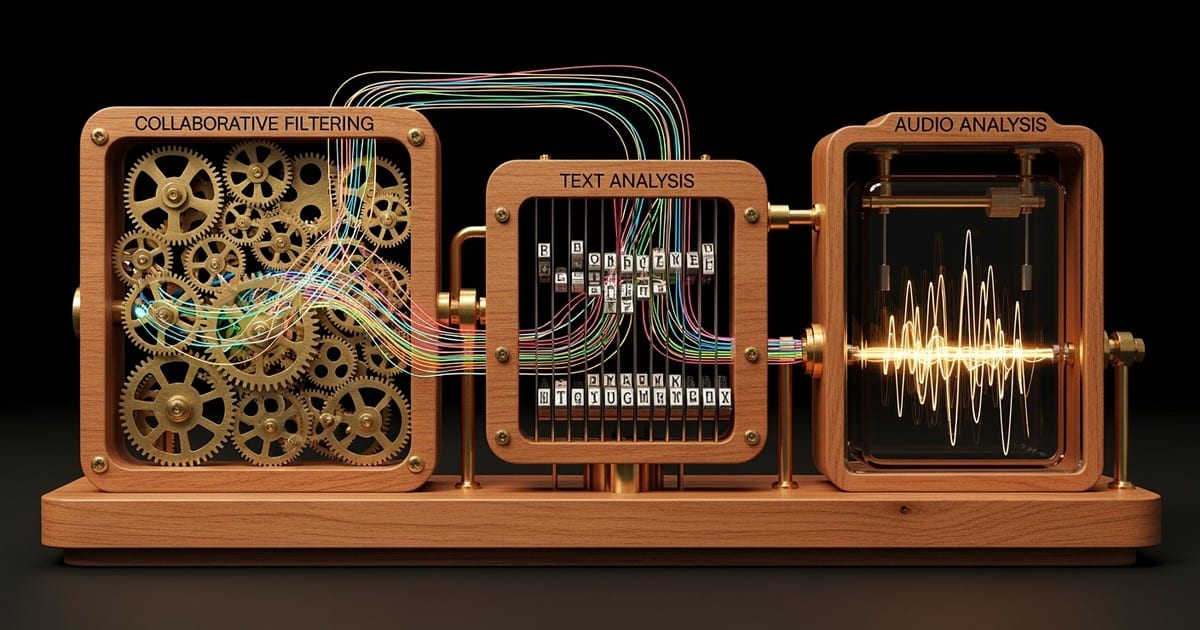

Spotify's recommendation pipeline follows a multi-stage pattern: candidate retrieval narrows millions of tracks to a few thousand, ranking scores those candidates, and re-ranking applies diversity and business constraints before presenting the final set. This architecture runs across Home, Search, playlists, and Autoplay, with separate teams responsible for different surfaces but shared infrastructure underneath.
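The three stages above can be sketched in a few lines of Python. This is only an illustration of the retrieve, rank, re-rank shape: the `Track` fields, the genre-based retrieval filter, and the one-track-per-artist cap are assumptions made for the example, not Spotify's actual models or constraints.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Track:
    track_id: str
    artist: str
    genre: str
    score: float  # hypothetical predicted engagement for one listener


def retrieve(catalog, listener_genres, limit):
    """Stage 1: a cheap filter that narrows the catalog to candidates."""
    matches = [t for t in catalog if t.genre in listener_genres]
    return matches[:limit]


def rank(candidates):
    """Stage 2: order candidates by the (hypothetical) model score."""
    return sorted(candidates, key=lambda t: t.score, reverse=True)


def rerank(ranked, max_per_artist, size):
    """Stage 3: enforce a diversity constraint, here a per-artist cap."""
    per_artist = {}
    final = []
    for track in ranked:
        if per_artist.get(track.artist, 0) < max_per_artist:
            final.append(track)
            per_artist[track.artist] = per_artist.get(track.artist, 0) + 1
        if len(final) == size:
            break
    return final


def recommend(catalog, listener_genres, limit=1000, max_per_artist=1, size=3):
    candidates = retrieve(catalog, listener_genres, limit)
    return rerank(rank(candidates), max_per_artist, size)


catalog = [
    Track("t1", "Artist A", "indie", 0.9),
    Track("t2", "Artist A", "indie", 0.8),
    Track("t3", "Artist B", "indie", 0.7),
    Track("t4", "Artist C", "metal", 0.95),
    Track("t5", "Artist D", "folk", 0.6),
]
picks = recommend(catalog, listener_genres={"indie", "folk"})
print([t.track_id for t in picks])
```

In this toy run the highest-scoring track overall (`t4`) never reaches ranking because retrieval filtered it out, and `t2` is dropped at re-ranking by the artist cap. That is the practical consequence of a staged pipeline: a track has to clear every stage, not just score well in one.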

Three input signals

Collaborative filtering learns from co-listening and co-saving patterns. When listeners with overlapping tastes save your track, Spotify's embedding models identify similar listeners who have not heard it yet. These embeddings are trained from playlist co-occurrence data and interaction sequences. Think of it as "listeners who saved tracks A and B also tend to save track C."
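The "listeners who saved A and B also tend to save C" intuition can be shown with plain co-occurrence counting. This is a deliberately simple stand-in for the embedding models the paragraph describes; the listener names, libraries, and the `suggest` helper are all hypothetical.

```python
from collections import Counter
from itertools import combinations


def co_save_counts(libraries):
    """Count how often each pair of tracks is saved by the same listener."""
    pairs = Counter()
    for saved in libraries.values():
        for a, b in combinations(sorted(set(saved)), 2):
            pairs[(a, b)] += 1
    return pairs


def suggest(libraries, listener, top_n=3):
    """Rank tracks the listener has not saved by co-occurrence with their saves."""
    pairs = co_save_counts(libraries)
    owned = set(libraries[listener])
    scores = Counter()
    for (a, b), count in pairs.items():
        if a in owned and b not in owned:
            scores[b] += count
        elif b in owned and a not in owned:
            scores[a] += count
    return [track for track, _ in scores.most_common(top_n)]


libraries = {
    "ana": ["A", "B", "C"],
    "ben": ["A", "B", "C"],
    "cy": ["A", "B", "D"],
    "dee": ["A", "B"],
}
print(suggest(libraries, "dee"))
```

Here `dee` has saved A and B, and track C co-occurs with those saves more often than D does, so C is suggested first. Real systems learn dense embeddings rather than counting pairs, but the signal they learn from is the same kind of overlap.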

Text and content understanding has shifted significantly. Spotify now uses large language models as recommendation primitives, not just for understanding playlist titles and artist bios. Their Semantic IDs system represents each track as a sequence of quantized tokens, then fine-tunes an LLM to generate those tokens for tasks like playlist creation and music recommendation. A separate system called Text2Tracks translates natural language prompts directly into track recommendations using playlist-title-to-track training data. This matters because Prompted Playlists lets users describe what they want in words, and your track's metadata and listening context determine whether it matches.

Audio analysis captures raw sonic features: tempo, key, loudness, timbre, and structure. Spotify's generalized user representation framework includes an audio encoder that generates track embeddings directly from audio features, enabling the system to find sonically compatible neighbors even for tracks with limited listening history. Spotify has also published LLark, a multimodal foundation model that combines raw audio with text embeddings for music understanding and reasoning tasks.
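To make "sonically compatible neighbors" concrete, here is a small sketch of nearest-neighbor lookup with cosine similarity. The three-dimensional vectors and track names are invented for illustration; real audio embeddings have far more dimensions, and nothing here reflects Spotify's encoder.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def nearest(query, catalog, k=2):
    """Return the ids of the k catalog tracks most similar to the query."""
    ranked = sorted(catalog.items(), key=lambda kv: cosine(query, kv[1]), reverse=True)
    return [track_id for track_id, _ in ranked[:k]]


# Hypothetical embeddings; the dimensions carry no specific meaning.
catalog = {
    "lofi_1": [0.8, 0.2, 0.3],
    "metal_1": [0.1, 0.9, 0.7],
    "lofi_2": [0.9, 0.0, 0.4],
}
new_release = [0.9, 0.1, 0.3]  # a track with no listening history yet
print(nearest(new_release, catalog))
```

The new release has no saves or streams, yet it can still be placed next to the two lo-fi tracks because the comparison uses only the vectors. That is the cold-start benefit of audio-based embeddings.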

Cold start vs catalog

A brand-new release has no behavioral data. Audio embeddings and text understanding handle early scoring. If you pitch through Spotify for Artists at least seven days before release, Spotify guarantees placement in your followers' Release Radar playlists, giving the track a built-in first audience. Once real listeners start saving, replaying, and adding the track to playlists, collaborative filtering takes over and can scale you to new audiences.

Catalog tracks work differently. They already have deep interaction history. The system asks one question: does this song still extend sessions for the listeners it serves? Tracks with ongoing saves and low skip rates can re-enter Radio and Autoplay rotations indefinitely.

Note: Spotify's user representations update in near-real time across multiple time scales. There is no published "handover window" where audio signals stop mattering and collaborative signals take over.

Exploration vs exploitation

Spotify frames this tradeoff explicitly. On the Home screen, a Thompson sampling bandit selects content from multiple categories, calibrating the distribution of recommendations to match listener interest while still testing new content. A longer-horizon reinforcement learning model separates "clickiness" (short-term appeal) from "stickiness" (long-term satisfaction), because optimizing only for immediate clicks can misalign with what keeps listeners engaged over weeks.

The practical takeaway: Spotify does not just show users what it already knows they like. It actively tests new music on cohorts likely to respond, then expands or contracts reach based on engagement quality.
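For readers who want to see how a Thompson sampling bandit balances testing and exploiting, here is a minimal Beta-Bernoulli version. The category names and engagement rates are made up, and this is a textbook sketch rather than Spotify's Home implementation.

```python
import random


class BetaBandit:
    """Thompson sampling with a Beta(1, 1) prior per arm."""

    def __init__(self, arms, seed=None):
        self.rng = random.Random(seed)
        self.stats = {arm: [1, 1] for arm in arms}  # [alpha, beta] per arm

    def choose(self):
        # Sample a plausible engagement rate per arm and play the best sample.
        samples = {
            arm: self.rng.betavariate(alpha, beta)
            for arm, (alpha, beta) in self.stats.items()
        }
        return max(samples, key=samples.get)

    def update(self, arm, engaged):
        # Success increments alpha, failure increments beta.
        self.stats[arm][0 if engaged else 1] += 1


def simulate(true_rates, rounds=2000, seed=7):
    """Run the bandit against hypothetical true engagement rates."""
    rng = random.Random(seed)
    bandit = BetaBandit(true_rates, seed=seed + 1)
    pulls = {arm: 0 for arm in true_rates}
    for _ in range(rounds):
        arm = bandit.choose()
        pulls[arm] += 1
        bandit.update(arm, rng.random() < true_rates[arm])
    return pulls


pulls = simulate({"familiar_mix": 0.6, "new_release_shelf": 0.1})
print(pulls)
```

Early on both categories get shown because the bandit is uncertain about each. As evidence accumulates, the weaker category is sampled less often but never drops to zero probability, which mirrors the "expand or contract reach based on engagement" behavior described above.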

Engagement signals that matter

Spotify does not publish signal weights like "saves are worth 3x a stream." But across its creator documentation and research papers, the same behavioral signals appear repeatedly as inputs to personalization.

| Signal | Directional impact | Evidence level |
|---|---|---|
| Save to library | Very high positive | Confirmed: named as algorithm input |
| Playlist add | Very high positive | Confirmed: explicitly listed as algorithm input |
| Complete listen | High positive | Inferred: completion reduces skip signal, aligns with session-length goals |
| Repeat play | High positive | Confirmed: restarts appear in Spotify research features |
| Follow | High positive | Confirmed: used as implicit signal in Spotify's graph models |
| Share / send | Moderate positive | Inferred: plausible but not directly cited in published sources |
| Skip before 30s | Negative | Confirmed: skip rate is used as satisfaction proxy |

The 30-second threshold

A play counts as a stream at 30 seconds. Every skip before that mark costs twice: no stream count and a negative signal to the recommendation system. Spotify's sequential embedding research uses skip rate as a core satisfaction proxy, and their fast/slow interest model tracks skips alongside likes, playlist adds, and restarts.

Warning: Skips before 30 seconds register as negative signals. Get to the hook fast, and make sure ad creative sets accurate genre expectations to reduce mismatch-driven skips.

Spotify has not published whether skipping at 5 seconds differs from skipping at 25 seconds in terms of algorithmic weight. Treat the entire sub-30-second window as harmful and focus on reducing it through strong intros and accurate targeting. See the 30-second rule explained for a deeper breakdown.
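The threshold logic is simple enough to express directly. This sketch treats every play shorter than 30 seconds the same way, matching the advice above; labeling all such plays as "early skips" is a simplifying assumption, since a short play could also be a pause or an app close.

```python
STREAM_THRESHOLD_SECONDS = 30


def summarize_plays(play_durations):
    """Split plays into counted streams and sub-30-second early skips."""
    streams = sum(1 for s in play_durations if s >= STREAM_THRESHOLD_SECONDS)
    early_skips = len(play_durations) - streams
    rate = early_skips / len(play_durations) if play_durations else 0.0
    return {"streams": streams, "early_skips": early_skips, "early_skip_rate": rate}


# Seconds listened per play; a 5-second and a 25-second play count the same here.
print(summarize_plays([5, 25, 30, 180]))
```

Two of the four plays cross the threshold, so half the plays earn no stream count and add a negative signal at the same time.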

Session extension

Spotify's north star is session length. Their published reinforcement learning work explicitly models long-term listening engagement, and their cross-surface coordination system optimizes for keeping users in the app. A track that reliably makes someone keep listening gets more chances.

Three patterns create session extension. First, strong opening seconds that prevent early skips. Second, context fit, meaning your track belongs in the mood and tempo range of the playlist or seed it was selected from. Third, follow-on listening, where people play another of your songs or explore your artist page after hearing one track.

This is why playlist adds and shares punch above their weight. They embed your music in a listener's routine, generating repeated context-appropriate plays that feed collaborative filtering over time. Read more in the session extension strategy guide.

How each discovery surface works

Spotify surfaces are not all trying to do the same thing. Each has a specific job, and the algorithm selects placements based on that job.

| Surface | Job | What it rewards |
|---|---|---|
| Discover Weekly | Find new artists matching listener taste | Save rate, playlist adds, collaborative similarity |
| Release Radar | Deliver new music from followed artists | Follows, pitch timing, early saves |
| Daily Mix | Maintain comfort listening loops | Repeat plays, consistent session fit |
| Radio | Keep a session going from a seed | Audio proximity, low skips, long listens |
| Autoplay | Extend listening after a queue ends | Completion rate, low skips, mood fit |
| Smart Shuffle | Test new music inside user playlists | Low early skips, saves from seed audience |
| Daylist | Match the listener's current moment | Time-of-day patterns, recent engagement |

Discover Weekly updates every Monday. In June 2025, Spotify added controls that let listeners choose up to five genre options to guide the vibe, producing a fresh 30-track playlist based on that selection. You cannot submit to Discover Weekly directly. You influence it by driving the upstream signals it uses: follower growth, saves, playlist adds, and strong engagement in relevant listener cohorts.

Release Radar has one confirmed mechanic that matters more than everything else: if you pitch at least seven days before release, your song lands in your followers' Release Radar. This is a hard distribution floor. Follower count directly determines your Release Radar reach. Beyond followers, Release Radar likely uses artist affinity and predicted engagement to order tracks per listener, but Spotify has not published those details.

Daily Mix represents taste clusters, grouping segments of a listener's preferences (workout, focus, commute) into separate mixes that update frequently. This aligns with Spotify's published slow/fast interest modeling, where stable preferences and momentary context combine to shape what appears.

Smart Shuffle mixes personalized recommendations into user-created playlists and Liked Songs. For playlists with more than 15 tracks, Spotify inserts one recommendation for every three tracks. Recommendations are marked with a sparkle icon, and users can downvote to train future mixes. This creates algorithmic insertion opportunities inside listener-owned contexts, which is valuable because playlist adds are one of the signals Spotify's algorithms explicitly consider.
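The one-in-three insertion rule can be sketched as follows. Only the ratio and the 15-track cutoff come from the description above; the function name, the pass-through behavior for shorter playlists, and the omission of actual shuffling are assumptions made to keep the example small.

```python
def smart_shuffle_mix(playlist, recommendations, min_length=15, every=3):
    """Return (track, is_recommendation) pairs with recommendations interleaved."""
    if len(playlist) <= min_length:
        # Assumption: the text only covers playlists longer than 15 tracks,
        # so shorter ones are passed through unchanged in this sketch.
        return [(track, False) for track in playlist]
    recs = iter(recommendations)
    mixed = []
    for position, track in enumerate(playlist, start=1):
        mixed.append((track, False))
        if position % every == 0:
            rec = next(recs, None)
            if rec is not None:
                mixed.append((rec, True))  # would carry the sparkle icon in the app
    return mixed


playlist = [f"song_{i}" for i in range(1, 19)]  # 18 listener-chosen tracks
recs = [f"rec_{i}" for i in range(1, 11)]
mixed = smart_shuffle_mix(playlist, recs)
print(sum(1 for _, is_rec in mixed if is_rec), "recommendations inserted")
```

An 18-track playlist ends up with six inserted slots, which shows why this surface is a meaningful source of first exposures: the recommendation share of the session is fixed by the ratio, not by how large the artist already is.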

Search is evolving fast. Spotify's agentic search system uses an LLM to interpret queries, route them to specialized retrieval modules, and rank results. The Upcoming Releases hub, added in May 2025, surfaces personalized recommendations based on listening history and integrates Countdown Pages for pre-saves.

For deeper mechanics on each surface, see Spotify algorithmic playlists explained.

The editorial-algorithmic hybrid

Spotify's editorial and algorithmic systems are not separate worlds. Many playlists start with editors creating a track pool, then algorithms personalize the ordering and selection for each listener. Spotify calls these "algotorial" playlists, trained on signals like listening, skipping, and saving. This means editorial placement is both direct exposure and a pathway into algorithmic personalization.

Spotify maintains thousands of editorial playlists curated by a globally distributed team of genre, lifestyle, and culture specialists. Interviews with Spotify's editorial leadership have described a team of 100+ editors worldwide, though Spotify does not regularly publish an official headcount.

The pitch process

You pitch unreleased music through Spotify for Artists. Team members with Admin or Editor access can submit a pitch, and it must go in at least seven days before release. The pitch includes structured fields for genre, mood, and culture tags. You can pitch one song at a time, and you can edit your pitch up to release day, though edits are not guaranteed to be seen by editors.

The pitch serves two functions. First, editorial consideration, where a human decides whether to add your track to curated playlists. Second, Release Radar distribution, which is automatic as long as you meet the seven-day window. Strong performance from editorial placement generates behavioral signals (saves, playlist adds, low skips) that increase the probability of algorithmic pickup later, because it provides more interaction evidence for collaborative models.

What artists control

Release timing and pre-saves

Pitch at least seven days before release. This is the single most important tactical requirement in Spotify's discovery system. It guarantees Release Radar delivery and makes your track eligible for editorial review.
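A quick way to avoid missing the window is to compute the deadline from the release date. The helper names below are hypothetical, and the sketch works in whole calendar days; it does not account for time zones or any cutoff time of day.

```python
from datetime import date, timedelta

PITCH_LEAD_DAYS = 7


def pitch_deadline(release_date):
    """Latest calendar date that is still seven full days before release."""
    return release_date - timedelta(days=PITCH_LEAD_DAYS)


def meets_pitch_window(pitch_date, release_date):
    return pitch_date <= pitch_deadline(release_date)


release = date(2026, 3, 20)
print(pitch_deadline(release))
print(meets_pitch_window(date(2026, 3, 14), release))
```

For a 20 March release the deadline is 13 March, so a pitch submitted on 14 March falls outside the window. Building in extra margin is sensible, since the figure above is a minimum.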

Spotify's Upcoming Releases hub surfaces personalized recommendations under Search and highlights top Countdown Pages by pre-save volume. On release day, Spotify sends a push notification to pre-savers and automatically adds the music to their library. Pre-saves concentrate day-one engagement from your most committed listeners, supplying strong early signals during the window when your track has the least behavioral data.

1. Submit your pitch 7+ days before release. Log into Spotify for Artists and submit with accurate genre, mood, and culture tags to guarantee Release Radar inclusion and editorial eligibility.
2. Drive pre-saves before release day. Coordinate email, social, and ads to build pre-save volume. Pre-savers get a push notification and automatic library add on release day.
3. Concentrate day-one engagement. Retarget people who already showed intent: email subscribers, previous listeners, recent YouTube viewers. Optimize ad destinations for saves and completes, not clicks.
4. Monitor algorithmic pickup after 72 hours. Check the Playlists tab in Spotify for Artists to see if you are expanding beyond followers into Radio, Autoplay, or Discover Weekly.

Metadata

Metadata is a routing and context alignment layer, not SEO for Spotify. Clean genre, mood, and instrumentation tags determine which listener contexts your track gets compared against. Consistent artist credits, proper ISRCs, and high-resolution cover art prevent your track from falling into unclassified buckets. The pitch form uses genre, mood, and culture tags as structured fields, and Prompted Playlists matches user language to track metadata, so accuracy here directly affects whether your music appears in user-directed discovery surfaces.

Follower base and cross-platform signals

Followers are directly tied to Release Radar distribution. A larger follower base increases the volume of early qualified listeners, which supplies stronger data for collaborative models. Think of follower growth as a Release Radar reach multiplier.

Spotify's recommendation system emphasizes Spotify-side behaviors: listening, skipping, saving, following, and playlist adds. There is no public evidence that Spotify directly ingests TikTok views or YouTube watch time as ranking features. But cross-platform activity strongly affects Spotify distribution indirectly by driving searches, streams, follows, saves, and playlist adds on Spotify itself. A TikTok viral moment matters because it sends real listeners to Spotify who then generate the engagement signals the algorithm actually reads.

Discovery Mode

Discovery Mode campaigns are configured monthly through Spotify for Artists. There is no upfront budget. Instead, Spotify applies a 30% commission on recording royalties generated from streams of selected songs in Discovery Mode contexts. Other streams remain commission-free. This is one of the few algorithm-adjacent paid levers Spotify documents openly. See how Discovery Mode works for details on campaign timing and setup.
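The commission arithmetic is worth working through once. The royalty amounts below are invented; only the 30% rate and the rule that it applies solely to streams from Discovery Mode contexts come from the paragraph above.

```python
DISCOVERY_MODE_COMMISSION = 0.30


def discovery_mode_net(discovery_royalties, other_royalties):
    """Net recording royalties after commission on Discovery Mode streams only."""
    commission = discovery_royalties * DISCOVERY_MODE_COMMISSION
    net = (discovery_royalties - commission) + other_royalties
    return {"commission": round(commission, 2), "net": round(net, 2)}


# Hypothetical month: 100.00 earned in Discovery Mode contexts, 400.00 elsewhere.
print(discovery_mode_net(100.00, 400.00))
```

In this example the commission is 30.00 on a 500.00 total, an effective rate of 6%. The real question for a campaign is whether the extra streams it generates are worth more than the commission on the Discovery Mode portion.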

Fraud detection and royalty rules

Spotify has moved aggressively to reduce artificial streaming. Tracks must reach at least 1,000 streams in the previous 12 months to be included in the recorded music royalty pool. Distributors face per-track penalty fees when Spotify flags artificial streaming, with industry sources reporting fees around €10 per flagged track. In the 12 months through September 2025, Spotify removed over 75 million spam tracks from the platform.
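The 1,000-stream rule amounts to a rolling-window sum. This sketch assumes you have stream counts keyed by date and that the window is the 365 days ending on the check date; the exact boundaries Spotify uses are not stated above, so treat them as an assumption.

```python
from datetime import date, timedelta

MIN_STREAMS = 1000
WINDOW_DAYS = 365


def royalty_eligible(streams_by_day, as_of):
    """True if streams in the trailing window reach the minimum threshold."""
    window_start = as_of - timedelta(days=WINDOW_DAYS)
    total = sum(
        count for day, count in streams_by_day.items()
        if window_start < day <= as_of
    )
    return total >= MIN_STREAMS


# Hypothetical per-day totals for one track.
streams = {
    date(2024, 11, 1): 900,  # outside the window, ignored
    date(2025, 6, 1): 600,
    date(2025, 12, 1): 500,
}
print(royalty_eligible(streams, as_of=date(2026, 1, 1)))
```

Only the 1,100 streams inside the window count here, so the track qualifies. Older streams, however numerous, do not carry a catalog track over the line.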

Warning: Spotify's fraud detection charges distributors per-track fines. Vet all placements and monitor stream sources in Spotify for Artists.

Detection patterns target unnatural repetition from small account pools, anomalous geography and device patterns, high stream counts with extremely low saves or follows, and coordinated playlist manipulation. The safest approach: use conversion-focused marketing that results in diverse, real listeners with normal engagement behavior. Avoid any promotion that incentivizes "play on loop" behavior, and monitor your stream source breakdown for anything that looks artificial.
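Artists can run a rough self-audit on their own source breakdown using two of the patterns above: high streams with almost no saves, and heavy repetition from a small listener pool. Every threshold and source name in this sketch is an arbitrary placeholder chosen for illustration; Spotify does not publish its detection rules, and this is not a reconstruction of them.

```python
def suspicious_sources(sources, min_streams=500, min_save_rate=0.005,
                       max_streams_per_listener=20):
    """Flag sources whose engagement shape looks unlike normal listening."""
    flagged = []
    for name, stats in sources.items():
        if stats["streams"] < min_streams:
            continue  # too little data to judge
        listeners = stats["listeners"]
        save_rate = stats["saves"] / listeners if listeners else 0.0
        per_listener = stats["streams"] / listeners if listeners else float("inf")
        if save_rate < min_save_rate or per_listener > max_streams_per_listener:
            flagged.append(name)
    return flagged


# Hypothetical stream sources exported for one track.
sources = {
    "algorithmic": {"streams": 4000, "listeners": 2500, "saves": 300},
    "paid_playlist_x": {"streams": 9000, "listeners": 150, "saves": 0},
    "blog_embed": {"streams": 100, "listeners": 90, "saves": 2},
}
print(suspicious_sources(sources))
```

The paid playlist stands out on both counts: 60 streams per listener and zero saves. A flag like this is a prompt to investigate the placement, not proof of fraud.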

What changed in 2025-2026

Several changes in the past year affect how artists interact with Spotify's recommendation system.

| Date | Change | Impact |
|---|---|---|
| May 2025 | Upcoming Releases hub in Search | Pre-saves now surface in a dedicated discovery tab with push notifications on release day |
| Jun 2025 | Discover Weekly genre controls | Listeners can steer their mix with up to 5 genre filters, making accurate metadata more important |
| Sep 2025 | AI protection strengthening | 75M spam tracks removed; content farms targeted while legitimate AI use remains allowed |
| Oct 2025 | Taste Profile exclusion | Listeners can exclude one-off listens from influencing their recommendations |
| Nov 2025 | Shuffle "Fewer Repeats" | Default shuffle uses recent listening to reduce repeats, affecting how catalog tracks resurface |
| Dec 2025 | Prompted Playlists beta | Users type natural language instructions to generate playlists from their full listening history |
| Feb 2026 | Smart Reorder testing | Orders playlists by BPM and key like a DJ set, reported in testing |

The trend is clear: Spotify is giving listeners more control over how recommendations work. Prompted Playlists, genre controls on Discover Weekly, and taste profile exclusions all mean that accurate metadata and genuine listener engagement matter more than ever. Tracks with clear genre, mood, and tempo signals are more likely to match these user-directed surfaces.

Spotify frames AI-generated music as a creative tool, not something to ban. Their policy targets misuse by content farms and bad actors, not AI as a production method. Tracks are treated the same in recommendations regardless of how they were produced.

Measuring progress

Track deltas over absolutes. Build a baseline for each release, then aim to beat your own numbers on the next one.

| Metric | What to watch |
|---|---|
| Save rate | saves / listeners is the best way to compare traffic sources and creative quality |
| Skip rate | Focus on pre-30-second skips; lower is better |
| Completion rate | Share of plays that reach the end of the track |
| Playlist add rate | Proxy for long-term resurfacing, since playlist adds are a confirmed algorithm input |
| Algorithmic stream share | Track Discover Weekly, Release Radar, and Radio source percentages over time |
| Follower growth | Directly determines Release Radar reach for your next release |

If one traffic source inflates streams but drags saves and introduces skips, cut it. If a creative reliably lifts saves, roll it out across regions. Use save rate benchmarks and save events as your core KPI stack.
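The rate metrics in the table reduce to a few ratios, and the "deltas over absolutes" idea is a subtraction against your previous release. The field names and numbers below are hypothetical, and using listeners as the denominator for playlist add rate is an assumption, since the table does not define one.

```python
def engagement_rates(stats):
    """Compute per-listener and per-play rates from raw counts."""
    listeners, plays = stats["listeners"], stats["plays"]
    return {
        "save_rate": stats["saves"] / listeners,
        "playlist_add_rate": stats["playlist_adds"] / listeners,  # assumed denominator
        "early_skip_rate": stats["early_skips"] / plays,
        "completion_rate": stats["completions"] / plays,
    }


def deltas(current, baseline):
    """Change in each rate versus the previous release."""
    return {name: current[name] - baseline[name] for name in current}


previous = engagement_rates({
    "listeners": 1000, "plays": 1500, "saves": 80,
    "playlist_adds": 30, "early_skips": 450, "completions": 750,
})
latest = engagement_rates({
    "listeners": 1000, "plays": 1500, "saves": 120,
    "playlist_adds": 40, "early_skips": 300, "completions": 900,
})
for name, change in deltas(latest, previous).items():
    print(f"{name}: {change:+.3f}")
```

Running the same calculation per traffic source rather than per release gives the comparison described above: a source that lifts streams while lowering save rate and raising early skips shows up immediately as negative deltas.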

FAQs

Do more streams mean better algorithmic support?

No. If those streams come with high skips and no saves, they hurt. Spotify's research repeatedly uses skip rate as a satisfaction proxy, and their fraud detection targets exactly this pattern: high streams with weak engagement. Quality of interaction matters more than volume.

Do paid ads hurt algorithmic reach?

No. Low-quality traffic hurts. High-intent traffic that saves and completes can train the algorithm in your favor, because collaborative models respond to the quality of interaction, not the acquisition channel.

How does Spotify's per-stream rate compare to other platforms?

Spotify's RPM (revenue per 1,000 streams) is usually lower than that of some other major listening platforms, but its algorithmic discovery surfaces can generate far more volume. Compare the current Spotify royalty data with Amazon Music, Apple Music, and YouTube Music and Art Tracks, then model volume and repeat listening separately. A lower RPM with much stronger algorithmic reach can still win.

Does getting on one big playlist guarantee growth?

No, and bad placements can actively hurt. Placement performance governs whether the system expands your reach or pulls back. A mismatched playlist generates skips and sends negative signals. A smaller, well-matched playlist can generate saves that compound through algorithmic pickup.

Does the algorithm favor major labels?

Not on algorithmic surfaces. Editorial playlists are curated by humans who may have label relationships. But Discover Weekly, Radio, and Autoplay rank purely on listener fit and engagement signals. Independent artists with strong engagement regularly appear in algorithmic placements.

Do you need to release on Friday?

Friday aligns with Release Radar refresh and the global chart cycle, but the seven-day pitch window matters more than the release day itself. See our best day to release music data study for more on release timing.